Ruthlessly Helpful

Stephen Ritchie's offerings of ruthlessly helpful software engineering practices.

Keep Your Privates Private

Posted on March 2, 2012

Often I am asked variations on this question: Should I unit test private methods?

The Visual Studio Team Test blog describes the Publicize testing technique in Visual Studio as one way to unit test private methods. There are other methods.

As a rule of thumb: Do not unit test private methods.

Encapsulation

The concept of encapsulation means that a class’s internal state and behavior should remain “unpublished”. Any instance of that class is only manipulated through the exposed properties and methods.

The class “publishes” properties and methods by using the C# keywords: public, protected, and internal.

The one keyword that says “keep out” is private. Only the class itself needs to know about this property or method. Since any unit test ensures that the code works as intended, the idea of some outside code testing a private method is unconventional. A private method is not intended to be externally visible, even to test code.

However, the question goes deeper than unconventional. Is it unwise to unit test private methods?

Yes. It is unwise to unit test private methods.

Brittle Unit Tests

When you refactor the code-under-test, and the private methods are significantly changed, then the test code testing private methods must be refactored. This inhibits the refactoring of the class-under-test.

It should be straightforward to refactor a class when no public properties or methods are impacted. Private properties and methods, because they are not intended to be directly called, should be allowed to freely and easily change. A lot of test code that directly calls private members causes headaches.

Avoid testing the internal semantics of a class. It is the published semantics that you want to test.
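Here is a minimal sketch of what testing the published semantics can look like. The LoanPaymentCalculator class and its members are hypothetical names I made up for illustration; they are not from any real codebase. The private helper is exercised only through the public method, so it can be renamed, inlined, or split without touching the check.

```csharp
using System;

// Hypothetical class, for illustration only.
public class LoanPaymentCalculator
{
    // Published behavior: the only member a test should call.
    public decimal MonthlyInterest(decimal principal, decimal annualRatePercent)
    {
        if (principal < 0m)
        {
            throw new ArgumentOutOfRangeException("principal");
        }

        return Math.Round(principal * ToMonthlyRate(annualRatePercent), 2);
    }

    // Internal detail: free to change as long as MonthlyInterest
    // keeps returning the same results.
    private static decimal ToMonthlyRate(decimal annualRatePercent)
    {
        return annualRatePercent / 100m / 12m;
    }
}

public static class LoanPaymentCalculatorChecks
{
    // Exercises ToMonthlyRate indirectly, through the public method.
    public static bool MonthlyInterestIsCorrect()
    {
        var calculator = new LoanPaymentCalculator();

        // 12% a year is 1% a month; 1% of 1,000 is 10.
        return calculator.MonthlyInterest(1000m, 12m) == 10.00m;
    }
}
```

In a real project the check would be a test method in your unit testing framework of choice. The point is only that nothing outside the class ever names ToMonthlyRate.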

Zombie Code

Some dead code is only kept alive by the test methods that call it.

If only the public interface is tested, private methods are only called through public-method test coverage. Any private method or branch within the private method that cannot be reached through test coverage is dead code. Private method testing short-circuits this analysis.

Yes, these are my views on what might be a hot topic to some. There are other arguments, pro and con, many of which are covered in this article: http://www.codeproject.com/Articles/9715/How-to-Test-Private-and-Protected-methods-in-NET

Fake 555 Telephone Number Prefix and More

Posted on February 28, 2012

Unless you want your automated tests to send a text message to one of your users, you ought to use a fake phone number. In the U.S., there is the “dummy” 555 phone exchange, often used for fictional phone numbers in the movies and television.

These fake phone numbers are very helpful. For example, use these fake numbers to test the data entry validation of telephone numbers through the user interface.

example.com, example.org, example.net, and example.edu

What about automated tests that verify email address validation? Try using firstname.lastname@example.com.

In all the testing that you do, select fake Internet data:

Fake URLs: http://www.example.com/default.aspx

Fake top-level domain (TLD) names: .test, .example, .invalid

Fake second-level domain names: example.com, example.org, example.net, example.edu

Fake host names: http://www.example.com, http://www.example.org, http://www.example.net, http://www.example.edu

Source: http://en.wikipedia.org/wiki/Example.com

Source: http://en.wikipedia.org/wiki/Top-level_domain#Reserved_domains
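One way to keep this fake data in one place is a small constants class that the tests share. This is only a sketch: FakeTestData, its members, and the specific phone number are names and values I made up for illustration, and the list of reserved names is simply the one given above.

```csharp
using System;

// Hypothetical helper; the names are illustrative only.
public static class FakeTestData
{
    // Uses the 555 exchange, as in fictional phone numbers.
    public const string PhoneNumber = "202-555-0143";

    public const string EmailAddress = "firstname.lastname@example.com";

    public const string Url = "http://www.example.com/default.aspx";

    // Reserved second-level domains and TLDs, as listed in this post.
    private static readonly string[] ReservedNames =
    {
        "example.com", "example.org", "example.net", "example.edu",
        "test", "example", "invalid"
    };

    // Builds a distinct address per test user on the .test TLD.
    public static string EmailFor(int userNumber)
    {
        return "user" + userNumber + "@mail.test";
    }

    // Guard for test setup: true only when the host is one of the
    // reserved names or a subdomain of one.
    public static bool IsReservedHost(string host)
    {
        var normalized = host.ToLowerInvariant();

        foreach (var name in ReservedNames)
        {
            if (normalized == name ||
                normalized.EndsWith("." + name, StringComparison.Ordinal))
            {
                return true;
            }
        }

        return false;
    }
}
```

A guard like IsReservedHost can be called from test setup so that a real domain accidentally pasted into test data fails fast instead of sending mail.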

Fake Social Security Numbers

In the U.S., a Social Security number (SSN) is a nine-digit number issued to an individual by the Social Security Administration. Since the SSN is unique for every individual, it is personally identifiable information (PII), which warrants special handling. As a best practice, you do not want PII in your test data, scripts, or code.

There are special Social Security numbers which will never be allocated:

- Numbers with all zeros in any digit group (000-##-####, ###-00-####, ###-##-0000).

- Numbers of the form 666-##-####.

- Numbers from 987-65-4320 to 987-65-4329 are reserved for use in advertisements.

For many, changing all the SSNs to the form 987-00-xxxx works great, where xxxx is the original last four digits of the SSN. If duplicate SSNs are an issue, then use the 666 prefix or the 000 prefix (or use sequential numbers for the center digit group) to resolve the duplicates.

Source: http://en.wikipedia.org/wiki/Social_security_number#Valid_SSNs
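The 987-00-xxxx substitution described above can be sketched as a small helper. SsnScrubber is a hypothetical name of my own, not part of any library, and the sketch assumes the input holds exactly nine digits, with or without dashes.

```csharp
using System;
using System.Text;

// Hypothetical helper for scrubbing test data; not from any library.
public static class SsnScrubber
{
    // Rewrites "123-45-6789" as "987-00-6789". The 00 center group
    // makes the result a number that will never be allocated.
    public static string Scrub(string ssn)
    {
        if (ssn == null)
        {
            throw new ArgumentNullException("ssn");
        }

        // Keep ASCII digits only, so "123456789" and "123-45-6789" both work.
        var digits = new StringBuilder();
        foreach (var c in ssn)
        {
            if (c >= '0' && c <= '9')
            {
                digits.Append(c);
            }
        }

        if (digits.Length != 9)
        {
            throw new ArgumentException("Expected nine digits.", "ssn");
        }

        // Last four digits start at index 5.
        return "987-00-" + digits.ToString(5, 4);
    }
}
```

Because only the last four digits survive, two different people can end up with the same scrubbed value. That is the duplicate case mentioned above, and it still needs its own handling.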

More Fake Data

There are tons of sources of fake data out on the Internet. Here is one such place to start your search:

http://www.quicktestingtips.com/tips/category/test-data/

Rules for Commenting Code

Posted on February 25, 2012

Unreadable code with comments is inadequate code with comments you cannot trust. Code that is well written rarely needs comments. Only comments that provide additional, necessary information are useful.

Yesterday a colleague of mine told me that he lost 10 points on a university assignment because he did not comment his code. Today I saw a photo with a list of rules for commenting attributed to Tim Ottinger.

Ottinger’s three rules make a lot of sense. These rules are straightforward. In my experience, they are correct and proper. Here are Ottinger’s Comment Rules:

1. Primary Rule

Comments are for things that cannot be expressed in code.

This is common sense. But, sadly, it is not common practice. Software is written in a programming language. A reader fluent in the programming language must understand the code. The code must be readable. It must clearly express what it is that the code does.

Only add comments when some important thing must be communicated to the reader, and that thing cannot be communicated by making the code any more readable. For example, a comment with a link to the MACRS depreciation method could be important because it helps explain the source of the algorithm.

2. Redundancy Rule

Comments which restate code must be deleted.

Any restatement of the code is unlikely to be maintained over time. If the comment is maintained, then it just adds to the cost. More importantly, when comments are not maintained they either end up substantially misrepresenting the code or end up being ignored. Reading comments that misrepresent code is a waste of time, at best. At worst, they cause confusion or introduce bugs. Remove any comments that restate the code.

3. Single Truth Rule

If the comment says what the code could say, then the code must change to make the comment redundant.

Writing readable code is all about making sure that the compiler properly implements what the developer intended and making sure any competent developer can quickly and effectively understand the code. The code needs to do both: completely, correctly, and consistently. For example, a comment explaining that the variable x represents the principal amount of a loan violates the single truth rule. The variable ought to be named loanPrincipal. In this way the compiler uses the same variable to represent the same single true meaning that the human reader understands.
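A before-and-after sketch of the loanPrincipal example may help. The class name, the method names, and the simple interest formula are hypothetical choices of mine, picked only to show the renaming.

```csharp
using System;

// Hypothetical example; the formula is deliberately trivial.
public static class InterestExamples
{
    // Before: the comment carries meaning that the names do not.
    public static decimal Calc(decimal x, decimal r)
    {
        // x is the principal amount of the loan; r is the annual rate
        return x * r;
    }

    // After: the names say it, so the comment can be deleted.
    public static decimal AnnualInterest(decimal loanPrincipal, decimal annualRate)
    {
        return loanPrincipal * annualRate;
    }
}
```

Both methods compile to the same arithmetic. The difference is that the second version cannot drift out of sync with a comment, because there is no comment left to maintain.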

Tim Ottinger and Jeff Langr present more pragmatic guidance on when to write (and not write) comments: http://agileinaflash.blogspot.com/2009/04/rules-for-commenting.html

Now That’s High Praise

Posted on February 19, 2012

As a pragmatist, hearing that a fellow developer is getting a lot of value from my book is exhilarating. Dominic Zukiewicz wrote an excellent review of Pro .NET Best Practices. Here is the link to Dominic’s blog post: How to implement best practices with the .NET Framework

I’m a big fan of Steve McConnell. I’ve read most of his books and read Rapid Development cover to cover. I consider it his seminal work. It is very high praise to be compared favorably to Rapid Development.

Thank you.

Four Ways to Fake Time, Part 4

Posted on February 19, 2012

This is the fourth and final part in the Four Ways to Fake Time series. In Part 3 you learned how to use the IClock interface to improve testability. Using the IClock interface is very effective for new application development. However, when maintaining a legacy system, adding a new parameter to a class constructor might be a strict no-no.

This part looks at how a mock isolation framework can help. The goal of isolation testing is to test the code-under-test in a way that is separate from dependencies and any underlying components or subsystems. This post looks at how to fake time using the product TypeMock Isolator.

Fake Time 4: Mock Isolation Framework

The primary benefit of a mock isolation framework is that no refactoring of the code-under-test is needed. In other words, you can test legacy code as it is, without having to improve its testability before writing maintainable test code. Here is the code-under-test:

using System;

using Lender.Slos.Utilities.Configuration;

namespace Lender.Slos.Financial

{

public class ModificationWindow

{

private readonly IModificationWindowSettings _settings;

public ModificationWindow(

IModificationWindowSettings settings)

{

_settings = settings;

}

public bool Allowed()

{

var now = DateTime.Now;

// Start date's month & day come from settings

var startDate = new DateTime(

now.Year,

_settings.StartMonth,

_settings.StartDay);

// End date is 1 month after the start date

var endDate = startDate.AddMonths(1);

if (now >= startDate &&

now < endDate)

{

return true;

}

return false;

}

}

}

With TypeMock, the magic happens in two ways. First, the test method arrangement uses the Isolate class to set up expectations. The test method sets up the DateTime.Now property so that it returns currentTime as its value. This fakes the clock that the Allowed method reads.

Here is the revised test code:

[TestCase(1)]

[TestCase(5)]

[TestCase(12)]

[Isolated] // This is a TypeMock attribute

public void Allowed_WhenCurrentDateIsInsideModificationWindow_ExpectTrue(

int startMonth)

{

// Arrange

var settings = new Mock<IModificationWindowSettings>();

settings

.SetupGet(e => e.StartMonth)

.Returns(startMonth);

settings

.SetupGet(e => e.StartDay)

.Returns(1);

var classUnderTest =

new ModificationWindow(settings.Object);

var currentTime = new DateTime(

DateTime.Now.Year,

startMonth,

13);

Isolate

.WhenCalled(() => DateTime.Now)

.WillReturn(currentTime); // Setup getter to return the test's clock

// Act

var result = classUnderTest.Allowed();

// Assert

Assert.AreEqual(true, result);

}

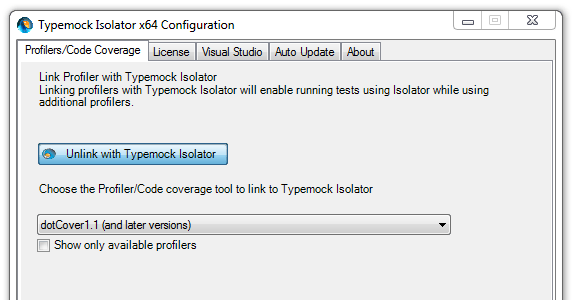

Second, the test must run in an isolated environment. This is how TypeMock fakes the behavior of System.DateTime; the test is running within the TypeMock environment. Here is the TypeMock Isolator configuration window.

The Cost of Isolation

Since TypeMock Isolator is a commercial product, be prepared to make the case for purchasing it. Here is some information on the business case for TypeMock: http://www.typemock.com/typemock-newsletters/2011/3/7/typemock-newsletter-march-2011.html

I find that TypeMock Isolator 6.2.3.0 is well integrated with Visual Studio 2010 SP1, ReSharper 6.1 and dotCover 1.2.

In Chapter 2 of Pro .NET Best Practices, you learn about Microsoft Research and the Pex and Moles project. Moles is a Visual Studio power tool, and you will find the latest download through the Visual Studio Gallery. As I describe, the Moles framework allows Pex to test code in isolation so that Pex is able to automatically generate tests. Therefore, you can use Moles to write unit tests that fake time.

Moles, as a way to fake time, is described in the Channel 9 post Moles – Replace any .NET method with a delegate and the blog post Did you know Microsoft makes a mocking tool?.

Just like Code Contracts, I hope and expect that Microsoft will make Moles a more significant part of .NET and Visual Studio. Today, I don’t find that Moles offers the same level of integration (for now?) with ReSharper and dotCover that TypeMock has. When I use Moles, I run my test code within their isolation environment from the command line. It works, but I really do prefer using the ReSharper test runner.

To sum up the mock isolation framework approach:

Pros:

- Works well when applied to legacy or Brownfield code

- No impact on class-users and method-callers

- A system-wide approach

- Testability is greatly improved

Cons:

- Tests must run within an isolation environment

- Commercial isolation frameworks can be cost prohibitive

I hope you found this overview of four ways to fake time to be helpful. I certainly would appreciate hearing from you about any new, different, and hopefully better ways to fake time in coded testing.

Where’s CAT.NET 2.0?

Posted on February 9, 2012

If you go to the Microsoft Security Development Lifecycle implementation page, you read about performing static analysis with CAT.NET. If you follow one of the download links it takes you to CAT.NET v1 CTP.

About a year ago the Beta version of CAT.NET 2.0 was out from the Microsoft Security Tools team. It looked very promising. Today, I am having trouble finding the download for CAT.NET 2.0. The link on the team’s CAT.NET 2.0 – Beta blog post is broken.

There is very little information on the Information Security Tools team’s Connect site.

Does Microsoft have an update on the Security Development Lifecycle tools?

Four Ways to Fake Time, Part 3

Posted on February 7, 2012

In Part 2 of this four-part series you learned how to use a class property to change the code’s dependency on the system clock to make the code easier to test. Adding the Now property is effective; however, adding a new property to every class isn’t always the best solution.

I don’t remember exactly when I first encountered the IClock interface. I do remember having to deal with the testability challenges of the system clock about 5 years ago. I was developing a scheduling module and needed to write tests that verified the code’s correctness. I think I learned about the IClock interface when I researched the MbUnit testing framework. At some point I read about IDateTime in Ben Hall’s blog or this article in ASP Alliance. I also read about FreezeClock in Ben’s post on xUnit.net extensions. Over time I collected the ideas and background that underlie this and similar approaches.

Fake Time 3: Inject The IClock Interface

I usually create a straightforward IClock interface within some utility or common assembly of the system. It becomes a low-level primitive of the system. In this post, I simplify the IClock interface just to keep the focus on the primary concept. Below I provide links to more detailed and elaborate designs. Without further ado, here is the basic IClock interface:

using System;

namespace Lender.Slos.Utilities.Clock

{

public interface IClock

{

DateTime Now { get; }

}

}

By using the IClock interface, the code in our example class is modified so that it has a dependency on the system clock through a new constructor parameter. Here is the rewritten code-under-test:

using System;

using Lender.Slos.Utilities.Clock;

using Lender.Slos.Utilities.Configuration;

namespace Lender.Slos.Financial

{

public class ModificationWindow

{

private readonly IClock _clock;

private readonly IModificationWindowSettings _settings;

public ModificationWindow(

IClock clock,

IModificationWindowSettings settings)

{

_clock = clock;

_settings = settings;

}

public bool Allowed()

{

var now = _clock.Now;

// Start date's month & day come from settings

var startDate = new DateTime(

now.Year,

_settings.StartMonth,

_settings.StartDay);

// End date is 1 month after the start date

var endDate = startDate.AddMonths(1);

if (now >= startDate &&

now < endDate)

{

return true;

}

return false;

}

}

}

Under non-test circumstances, the SystemClock class, which implements the IClock interface, is passed through the constructor. A very simple SystemClock class looks like this:

using System;

namespace Lender.Slos.Utilities.Clock

{

public class SystemClock : IClock

{

public DateTime Now

{

get { return DateTime.Now; }

}

}

}

For those of you who are using an IoC container, it should be clear how the appropriate implementation is injected into the constructor when this class is instantiated. I recommend you use constructor DI when using the IClock interface approach. For those following a Factory pattern, the factory class ought to supply a SystemClock instance when the factory method is called. If you’re not loosely coupling your dependencies (you ought to be) then you need to add another constructor that instantiates a new SystemClock, kind of like this:

public ModificationWindow(IModificationWindowSettings settings)

: this(new SystemClock(), settings)

{

}

In this post, we are most concerned about improving the testability of the code-under-test. The revised test method sets up the IClock.Now property so as to return currentTime as its value. This, in effect, fakes the Allowed method, and establishes a known value for the system clock. Here is the revised test code:

[TestCase(1)]

[TestCase(5)]

[TestCase(12)]

public void Allowed_WhenCurrentDateIsInsideModificationWindow_ExpectTrue(

int startMonth)

{

// Arrange

var settings = new Mock<IModificationWindowSettings>();

settings

.SetupGet(e => e.StartMonth)

.Returns(startMonth);

settings

.SetupGet(e => e.StartDay)

.Returns(1);

var currentTime = new DateTime(

DateTime.Now.Year,

startMonth,

13);

var clock = new Mock<IClock>();

clock

.SetupGet(e => e.Now)

.Returns(currentTime); // Setup getter to return the test's clock

var classUnderTest =

new ModificationWindow(

clock.Object,

settings.Object);

// Act

var result = classUnderTest.Allowed();

// Assert

Assert.AreEqual(true, result);

}
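If you would rather not pull in a mocking library, the same test can be arranged with hand-written fakes. The sketch below is self-contained under a few assumptions: FixedClock, FixedSettings, and ModificationWindowChecks are hypothetical names of mine, and the shape of IModificationWindowSettings is inferred from how the settings are used in this post, not copied from the real interface.

```csharp
using System;

public interface IClock
{
    DateTime Now { get; }
}

// Assumed shape, inferred from how the settings are used in the post.
public interface IModificationWindowSettings
{
    int StartMonth { get; }
    int StartDay { get; }
}

// Hand-written fake: always reports the time it was given.
public class FixedClock : IClock
{
    private readonly DateTime _now;

    public FixedClock(DateTime now) { _now = now; }

    public DateTime Now { get { return _now; } }
}

// Hand-written fake for the settings.
public class FixedSettings : IModificationWindowSettings
{
    public FixedSettings(int startMonth, int startDay)
    {
        StartMonth = startMonth;
        StartDay = startDay;
    }

    public int StartMonth { get; private set; }
    public int StartDay { get; private set; }
}

// Same logic as the code-under-test shown earlier in this post.
public class ModificationWindow
{
    private readonly IClock _clock;
    private readonly IModificationWindowSettings _settings;

    public ModificationWindow(IClock clock, IModificationWindowSettings settings)
    {
        _clock = clock;
        _settings = settings;
    }

    public bool Allowed()
    {
        var now = _clock.Now;
        var startDate = new DateTime(now.Year, _settings.StartMonth, _settings.StartDay);
        var endDate = startDate.AddMonths(1);
        return now >= startDate && now < endDate;
    }
}

public static class ModificationWindowChecks
{
    // Arranges a window starting on day 1 of startMonth and asks
    // whether it is open on the given (fake) date.
    public static bool AllowedOn(DateTime today, int startMonth)
    {
        var window = new ModificationWindow(
            new FixedClock(today),
            new FixedSettings(startMonth, 1));

        return window.Allowed();
    }
}
```

With this in place, AllowedOn(new DateTime(2012, 12, 13), 12) is true on any day the test happens to run, which is the whole point of injecting the clock.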

If you’re looking for more depth and detail, take a look at this very good post on the IClock interface by Al Gonzalez: http://algonzalez.tumblr.com/post/679028234/iclock-a-test-friendly-alternative-to-datetime

The Gallio/MbUnit testing framework has its own IClock interface. I don’t like production deployments containing testing framework assemblies; however, the Gallio approach offers a few ideas to enhance the IClock interface.

Pros:

- Works well with an IoC Container/Dependency Injection approach

- Can work with .NET Framework 2.0 and later

- No impact on class-users and method-callers

- A system-wide approach

- Testability is greatly improved

Cons:

- System-wide change, some risk

- Can be disruptive when applied to legacy or Brownfield applications

I often use this approach when working in Greenfield application development or when major refactoring is warranted.

In the next part of this Fake Time series we’ll look at a mock isolation framework approach.

.NET Developer’s Journal Book Review

Posted on February 3, 2012

Tad Anderson wrote an excellent review of Pro .NET Best Practices in the .NET Developer’s Journal.

Here’s a link to Tad’s original blog post: Real World Software Architecture: Pro .NET Best Practices Book Review

Four Ways to Fake Time, Part 2

Posted on January 31, 2012

In Part 1 of this four-part series you learned how a code’s implicit dependency on the system clock can make the software difficult to test. The first post presented a very simple solution: pass in the clock as a method parameter. It is effective; however, adding a new parameter to every method of a class isn’t always the best solution.

Fake Time 2: Brute Force Property Injection

Here is a second way to fake time. It is brute force in the sense that it is rudimentary. Using full-blown dependency injection with an IoC container is left as an exercise for the reader. The goal of this post is to illustrate the principle and provide you with a technique you can use today.

Perhaps an example would be helpful …

using System;

using Lender.Slos.Utilities.Configuration;

namespace Lender.Slos.Financial

{

public class ModificationWindow

{

private readonly IModificationWindowSettings _settings;

public ModificationWindow(

IModificationWindowSettings settings)

{

_settings = settings;

}

// This property is for testing use only

private DateTime? _now;

public DateTime Now

{

get { return _now ?? DateTime.Now; }

internal set { _now = value; }

}

public bool Allowed()

{

var now = this.Now;

// Start date's month & day come from settings

var startDate = new DateTime(

now.Year,

_settings.StartMonth,

_settings.StartDay);

// End date is 1 month after the start date

var endDate = startDate.AddMonths(1);

if (now >= startDate &&

now < endDate)

{

return true;

}

return false;

}

}

}

In this example code, the Allowed method changed very little from how it was written at the end of the first post. The primary difference is that there isn’t any optional clock argument. The value of the now variable comes from the new class property named Now.

Let’s take a closer look at the Now property. First, it has a backing variable named _now, which is declared as a nullable DateTime. Second, since _now defaults to null, this means that the Now property getter will return System.DateTime.Now if the property is never set. In other words, if the Now property is never set then that property behaves like a call to System.DateTime.Now.

Note that the null coalescing operator (??) expression in the getter can be rewritten as follows:

get

{

return _now == null ? DateTime.Now : _now.Value;

}

And so, if our test code sets the Now property to a specific DateTime value then that property returns that DateTime value, instead of System.DateTime.Now. This allows the test code to “freeze the clock” before calling the method-under-test.
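The freeze behavior can be seen in isolation with a trimmed-down stand-in for the class. ClockHolder and ClockHolderChecks are hypothetical names used only for this sketch, and the checks live in the same assembly as the class, so the internal setter is reachable without InternalsVisibleTo.

```csharp
using System;

// Trimmed-down stand-in for the class in this post;
// only the Now property is kept.
public class ClockHolder
{
    private DateTime? _now;

    public DateTime Now
    {
        get { return _now ?? DateTime.Now; }
        internal set { _now = value; }
    }
}

public static class ClockHolderChecks
{
    // Once set, the property keeps returning the frozen value.
    public static bool FrozenValueIsReturned()
    {
        var holder = new ClockHolder();
        var frozen = new DateTime(2012, 1, 13);

        holder.Now = frozen;

        return holder.Now == frozen;
    }

    // When never set, the property falls through to the system clock,
    // so its value lies between two surrounding DateTime.Now readings.
    public static bool UnsetValueTracksSystemClock()
    {
        var holder = new ClockHolder();

        var before = DateTime.Now;
        var observed = holder.Now;
        var after = DateTime.Now;

        return before <= observed && observed <= after;
    }
}
```

The second check assumes the system clock is not adjusted backwards while it runs, which is good enough for a sketch.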

The following is the revised test method. It sets the Now property to the currentTime value at the end of the arrangement section. This, in effect, fakes the Allowed method, and establishes a known value for the clock.

[TestCase(1)]

[TestCase(5)]

[TestCase(12)]

public void Allowed_WhenCurrentDateIsInsideModificationWindow_ExpectTrue(

int startMonth)

{

// Arrange

var settings = new Mock<IModificationWindowSettings>();

settings

.SetupGet(e => e.StartMonth)

.Returns(startMonth);

settings

.SetupGet(e => e.StartDay)

.Returns(1);

var currentTime = new DateTime(

DateTime.Now.Year,

startMonth,

13);

var classUnderTest = new ModificationWindow(settings.Object);

classUnderTest.Now = currentTime; // Set the value of Now; freeze the clock

// Act

var result = classUnderTest.Allowed();

// Assert

Assert.AreEqual(true, result);

}

There is one more subtlety to mention. The test method cannot set the class-under-test’s Now property without being allowed access. This is accomplished by adding the following line to the end of the AssemblyInfo.cs file in the Lender.Slos.Financial project, which declares the class-under-test.

[assembly: InternalsVisibleTo("Tests.Unit.Lender.Slos.Financial")]

The use of InternalsVisibleTo establishes a friend assembly relationship.

Pros:

- A straightforward, KISS approach

- Can work with .NET Framework 2.0

- No impact on class-users and method-callers

- Isolated change, minimal risk

- Testability is greatly improved

Cons:

- Improves testability only one class at a time

- Adds a testing-use-only property to the class

I use this approach when working with legacy or Brownfield code. It is a minimally invasive technique.

In the next part of this Fake Time series we’ll look at the IClock interface and a constructor injection approach.

Four Ways to Fake Time

Posted on January 26, 2012

Are your unit tests failing because the code-under-test is coupled to the system clock? In other words, does the method you are testing use the System.DateTime.Now property, and that dependency is making it hard to properly unit test the code?

This series of posts presents four ways to fake time as a means of improving testability. Specifically, we’ll look at these four techniques:

- The Optional ‘clock’ Parameter

- Brute Force Property Injection

- Inject The IClock Interface

- Mock Isolation Framework

Time Is Bumming Me Out

Time is so rigid. It keeps on ticking, ticking … into the future. The code becomes dependent on the system clock. That dependency makes it hard to properly test the code. Worse, the test code cannot find a lurking bug … until *boom* … the bug blows the system up.

Perhaps an example would be helpful …

public bool Allowed()

{

// Start date's month & day come from settings

var startDate = new DateTime(

DateTime.Now.Year,

_settings.StartMonth,

_settings.StartDay);

// End date is 1 month after the start date

var endDate = new DateTime(

DateTime.Now.Year,

_settings.StartMonth + 1, // This is the lurking bug!

_settings.StartDay);

if (DateTime.Now >= startDate &&

DateTime.Now < endDate)

{

return true;

}

return false;

}

Notice the lurking bug in this code? Well, if the start month is December then a defect emerges at runtime. The error message you would see looks something like this:

System.ArgumentOutOfRangeException : Year, Month, and Day parameters describe an un-representable DateTime.

This defect is not too hard to notice if you envision that the value of the _settings.StartMonth property is 12. However, the problem with lurking bugs is that they go unnoticed until … bang! At some future date (all too often in production) the software hiccups.

In the following code sample, the defect stays hidden until the continuous integration server runs the tests near the end of the year. This is a fairly typical unit testing approach, intended to test the Allowed method.

[Test]

public void Allowed_WhenCurrentDateIsOutsideModificationWindow_ExpectFalse()

{

// Arrange

var startMonth = DateTime.Now.Month;

var settings = new Mock<IModificationWindowSettings>();

settings

.SetupGet(e => e.StartMonth)

.Returns(startMonth + 1);

settings

.SetupGet(e => e.StartDay)

.Returns(1);

var classUnderTest = new ModificationWindow(settings.Object);

// Act

var result = classUnderTest.Allowed();

// Assert

Assert.AreEqual(false, result);

}

You don’t want to go into work on 1-Nov and find that this test is suddenly failing. The test method starts failing in November because that’s when the start month it configures (startMonth + 1) is 12 and the defect is revealed. It keeps failing in December, when the test itself asks for a start month of 13. You’ll have a sickening thought, “Wait a second … is it failing in production?”

The production code is not working as intended, and worse than that, the test code never found the issue before the defect went into production.

If you revise the test code to include a few test cases, like the ones shown below, then the boundary conditions are covered and the defect is found every time these test cases are run. However, this test method has another flaw. It cannot pass for all three cases because the code-under-test has a dependency on DateTime.Now.

[TestCase(1)]

[TestCase(5)]

[TestCase(12)]

public void Allowed_WhenCurrentDateIsOutsideModificationWindow_ExpectFalse(

int startMonth)

{

// Arrange

var settings = new Mock<IModificationWindowSettings>();

settings

.SetupGet(e => e.StartMonth)

.Returns(startMonth + 1);

settings

.SetupGet(e => e.StartDay)

.Returns(1);

var classUnderTest = new ModificationWindow(settings.Object);

// Act

var result = classUnderTest.Allowed();

// Assert

Assert.AreEqual(false, result);

}

Before we investigate any further, let’s go back and revise the code-under-test to fix the bug. Here is an improved implementation of the Allowed method.

public bool Allowed()

{

// Start date's month & day come from settings

var startDate = new DateTime(

DateTime.Now.Year,

_settings.StartMonth,

_settings.StartDay);

// End date is 1 month after the start date

var endDate = startDate.AddMonths(1);

if (DateTime.Now >= startDate &&

DateTime.Now < endDate)

{

return true;

}

return false;

}
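To see why AddMonths fixes the December case, here is a small side-by-side sketch. EndDateExamples and its two methods are hypothetical names of mine; the DateTime constructor and AddMonths are the standard .NET members.

```csharp
using System;

public static class EndDateExamples
{
    // The original approach: build the end date from month + 1.
    // Returns false when the constructor rejects the month.
    public static bool TryMonthPlusOne(int year, int startMonth, out DateTime endDate)
    {
        try
        {
            endDate = new DateTime(year, startMonth + 1, 1);
            return true;
        }
        catch (ArgumentOutOfRangeException)
        {
            // Month 13 is not a representable DateTime.
            endDate = default(DateTime);
            return false;
        }
    }

    // The improved approach: calendar arithmetic on the start date.
    // AddMonths rolls December over into January of the next year.
    public static DateTime EndDateViaAddMonths(int year, int startMonth)
    {
        return new DateTime(year, startMonth, 1).AddMonths(1);
    }
}
```

For a start month of 12 in 2012, TryMonthPlusOne returns false, while EndDateViaAddMonths returns 1 January 2013.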

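Imagine that today is Friday the 13th of January 2012. When we run test cases that expect the current date to fall inside the modification window (like the ones in the next section, but without a fake clock), the first case passes, but the other two fail. We want all three test cases to pass every time they are run.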

Fake Time 1: The Optional ‘Clock’ Parameter

Let’s change the code-under-test so that time is provided as an optional parameter. This approach is made possible by C# 4.0, released alongside .NET Framework 4.0, which allows C# developers to define optional parameters.

public bool Allowed(DateTime? clock = null)

{

var now = clock ?? DateTime.Now; // If no clock is provided then use Now.

// Start date's month & day come from settings

var startDate = new DateTime(

now.Year,

_settings.StartMonth,

_settings.StartDay);

// End date is 1 month after the start date

var endDate = startDate.AddMonths(1);

if (now >= startDate &&

now < endDate)

{

return true;

}

return false;

}

Now that the code-under-test accepts this ‘clock’ parameter, the test method is modified, as follows. All three test cases now pass.

[TestCase(1)]

[TestCase(5)]

[TestCase(12)]

public void Allowed_WhenCurrentDateIsInsideModificationWindow_ExpectTrue(

int startMonth)

{

// Arrange

var settings = new Mock<IModificationWindowSettings>();

settings

.SetupGet(e => e.StartMonth)

.Returns(startMonth);

settings

.SetupGet(e => e.StartDay)

.Returns(1);

var currentTime = new DateTime(

DateTime.Now.Year,

startMonth,

13);

var classUnderTest = new ModificationWindow(settings.Object);

// Act

var result = classUnderTest.Allowed(currentTime);

// Assert

Assert.AreEqual(true, result);

}
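The optional parameter is what keeps existing callers working. The following self-contained sketch shows both call styles. ModificationWindowSketch is a hypothetical static stand-in for the class in this post, with the start month and day passed as arguments so that the example needs no settings object.

```csharp
using System;

// Hypothetical stand-in: settings are passed as plain arguments.
public static class ModificationWindowSketch
{
    // clock defaults to null, so callers that do not care about
    // faking time can leave it out entirely.
    public static bool Allowed(int startMonth, int startDay, DateTime? clock = null)
    {
        var now = clock ?? DateTime.Now; // If no clock is provided then use Now.

        var startDate = new DateTime(now.Year, startMonth, startDay);
        var endDate = startDate.AddMonths(1);

        // Start date is inclusive; end date is exclusive.
        return now >= startDate && now < endDate;
    }
}
```

Production code calls Allowed(12, 1) and gets the real clock. Test code calls Allowed(12, 1, new DateTime(2012, 12, 13)) and gets the same answer on any day of the year.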

Pros:

- The simplest thing that could possibly work; a KISS approach

- Minimal impact to method callers

- Isolated changes, lower risk

- Testability is greatly improved

Cons:

- Only works with .NET Framework 4

- Method callers are able to pass improper dates and times, invalidating the method’s expected behavior

- Improves testability only one method at a time

- Adds testing-use-only parameters to method signatures

I recommend this approach when working with legacy or Brownfield code, which has been brought up to .NET 4, and a minimally invasive, very isolated technique is indicated.

In the next part of this Fake Time series we’ll look at a brute force, property injection approach.